AI That Sales Teams Actually Trust.

Your Data. Your LLM. Your Terms. Zaon curates and verifies your sales knowledge to train team-specific LLMs — so every rep has instant, accurate answers that win deals. Securely deployed in your cloud.


Generic LLMs hallucinate. They don't know your data. They can't be trusted with sensitive decisions.

Generic LLMs fabricate facts, invent sources, and present fiction as truth with complete confidence.

Sending proprietary data to external AI providers creates compliance risks and security vulnerabilities.

Your Sales, Legal, and HR teams need AI trained on relevant data—not generic internet knowledge.

Turn fragmented knowledge into shared understanding. Zaon transforms your enterprise data into curated, team-specific LLMs—automatically.

Connect and vectorize enterprise knowledge from SharePoint, OneDrive, Google Drive, Box, SQL, Databricks, Salesforce, PDFs & Docs into a team-specific knowledge base.

Our Human-in-the-loop Truth Curation™ engine rests on three reinforcing pillars: Knowledge Verification (expert review), Continuous Feedback Loop, and Contextual Intelligence. Together they ensure that only accurate, up-to-date data enters your system.

A proprietary process combines Pre-Training, Mid-Training, Supervised Fine-Tuning, and Reinforcement Learning to generate a custom LLM trained on your curated data.

Deploy custom agents for each team—Sales, HR, Legal, Engineering—each pointing to a custom LLM trained on your team's own data.

Access your custom agent and LLM from any MCP-capable platform, including OpenAI, Copilot, Claude, Azure, and Google.

Continuously monitor data sources and restart the cycle when they change. Training and redeployment happen automatically to keep your team LLM and agents current.

Seamlessly integrate with the data sources your teams already use.

Train specialized AI assistants for each department, each with access only to relevant, verified data.

AI trained on your CRM, proposals, win/loss data, and competitive intelligence.

AI trained on brand guidelines, campaign data, and messaging frameworks.

AI trained on policies, handbooks, benefits, and onboarding materials.

AI trained on contracts, compliance documents, and legal precedents.

AI trained on codebases, documentation, architecture decisions, and runbooks.

AI trained on financial reports, forecasts, and accounting policies.

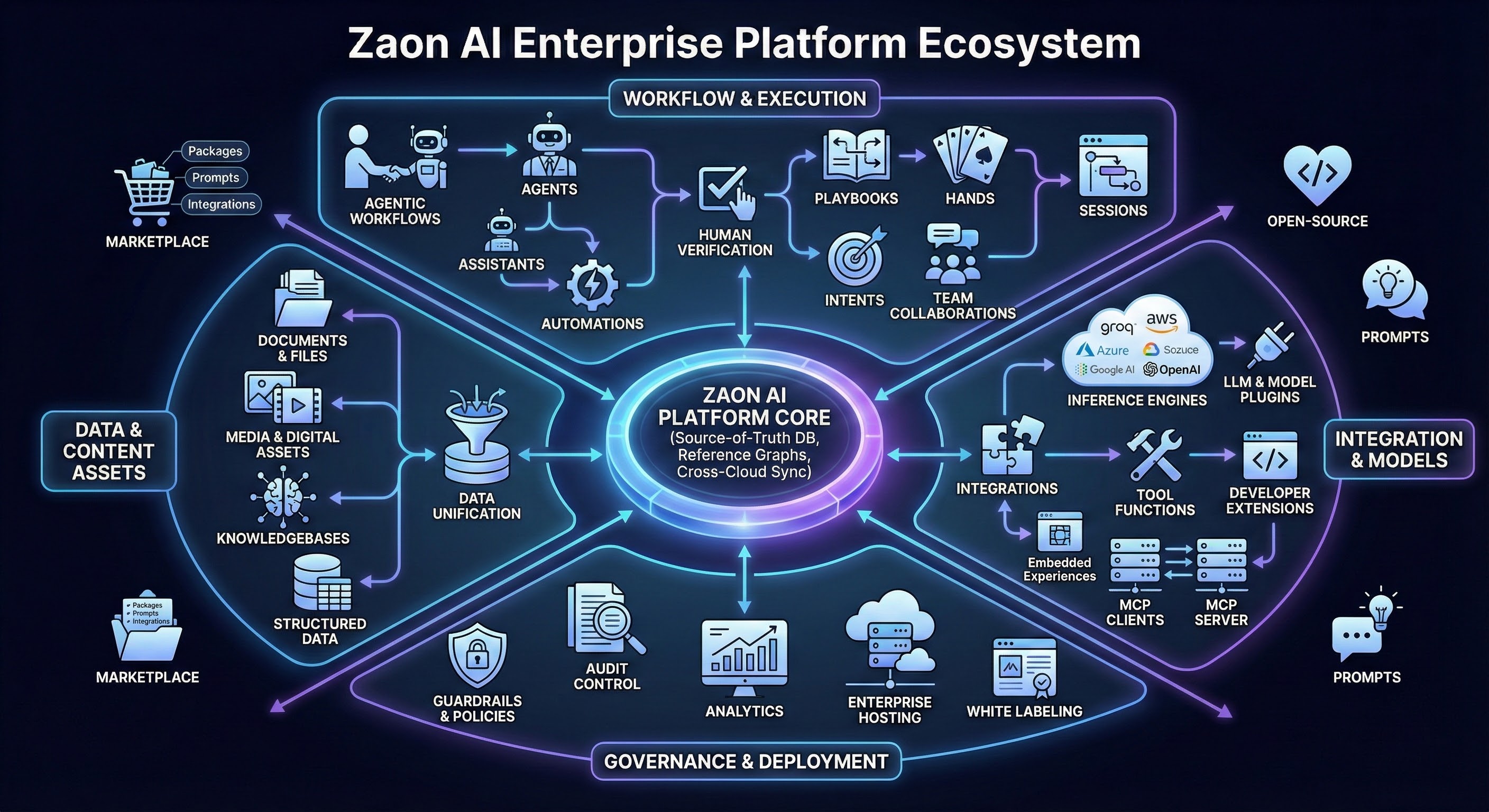

Everything you need to scale AI responsibly while maintaining control, security, and operational clarity.

Empowered managers that oversee assistants, tools, and automations—reporting to humans and validating AI work product.

Perform tasks on behalf of agents and humans—trigger automations, respond on Slack, manage emails, and more.

Create trigger-based workflows with custom rules and conditions—generate reports, stream data, and automate processes.

Ready-to-use templates with all prompts, assets, workflows, and integrations needed to get complex work done fast.

Multi-agent workflows with branching, conditions, and human-in-the-loop—dynamically created and modified.

Hundreds of pre-built tool functions for agents and assistants—plus build your own custom integrations.

Curated collection of tested prompts for common tasks—version controlled, shareable, and optimized for your business context.

Automated knowledge capture from humans and AI—synced and exported to existing enterprise solutions.

Collect assets and actions from disparate AI solutions into a single source-of-truth database.

Upload, analyze, and automate documents—assistants can review, revise, and create new iterations.

Integrate, create, and vectorize structured data—transform between models and integrations.

Complete semantic graphing of work product and data—vectorized to empower AI efficiency.

Support for images, audio, and video—analyze, modify, generate, and store media content.

Custom integrations in any language—email, Slack, data lakes, analytics, or any enterprise need.

Fully compliant with the Model Context Protocol: integrate with any authenticated MCP client or host.

100% API-driven with SDKs in multiple languages, so you can build custom integrations with line-of-business (LOB) software.

Hundreds of LLMs and foundation models supported (or bring your own), with the option to enable specific models for different employee groups.

Community-driven (or private) gallery of prompts, agents, assistants, workflows, integrations, and playbooks—free and paid.

Rebrand and customize the platform—source code provided for deep LOB application integration.

Advanced corporate policies for AI safety and compliance, layered on top of Amazon Bedrock and Azure AI Content Safety.

Configurable approval chains for AI-generated content—designed to fit existing enterprise workflows.

View and export access and usage logs—create alerts and rules for specific conditions.

Unified statistics from all LLMs and models—dashboards and streaming to data analytics platforms.

Integrates with your directory, teams, roles, and permissions—enables collaboration between employees and AI.

Synchronize actions and assets between cloud and on-prem AI platforms—unified source of truth.

Zaon is deployed entirely within your private cloud infrastructure. Your data stays yours—period.

Zaon installs in your preferred cloud or on-premises infrastructure with Terraform automation.

See how Zaon can transform your enterprise data into reliable, team-specific LLMs.